Built for anyone who wants to move fast. Teams, products, agents. Real-time speech, vision, worlds and conversations at any scale. From one user to millions, without the complexity. Up to 20× more cost-efficient than what came before.

Copy-paste simplicity that gets you moving fast. Dive in with confidence, build without friction and scale your experience as far as your imagination reaches.

Experiment or scale. Individual or enterprise. We bill only for actual usage, and we’ve got you covered from day one.

Ojin is built on years of experience in world-class cloud streaming. We specialise in hosting and optimising models for real-time use cases, and Ojin’s globally distributed inference cloud keeps latency low enough to feel truly interactive.

Ojin automatically runs real-time inference wherever it’s cheapest and fastest.

Our hybrid-cloud infrastructure finds the optimal GPU in real time and passes the savings on through transparent, usage-based pricing. Sign up now and start with $10 in free credits.

Thanks to our API-first approach, everything you do on Ojin can be automated and deployed at scale. The Ojin Model API is a simple, framework-agnostic HTTP/WebSocket endpoint that can be used with Pipecat, LiveKit Agents, or any custom solution.

We’re built on trust, with strict compliance standards, data privacy controls and robust safeguards that protect your information at every layer.

European AI regulatory framework

German-based company, DPA available

Saudi Arabia’s Personal Data Protection Law

Secure user management

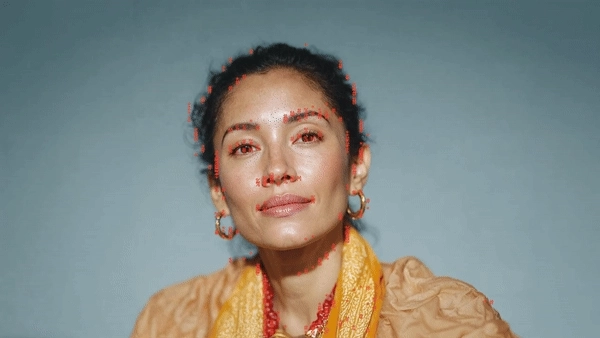


Built on top of our Model API, our Agent API enables you to build and deploy conversational AI agents and embed them anywhere.

Ship blazing-fast real-time applications. We can’t wait to see what you build.